Internal AI Platforms for IT Teams: Multi-Tenant GPU Chargeback in Practice

A case study on how an enterprise IT department built an internal AI platform with transparent GPU cost allocation.

"Finance couldn't trust our GPU numbers—and teams couldn't plan their budgets"

A global enterprise IT department serves 18 internal teams, including R&D, marketing, and support automation. It launched an internal AI platform to standardize access to GPU resources, but cost visibility was absent, queue times spiked during shared demand, and security concerns made multi-tenant sharing a hard sell.

Three Core Pain Points: No Cost Visibility, Long Queues, and Security Concerns

Pain Point 1: No Cost Visibility by Team or Product

- Finance couldn't attribute spend: GPU consumption was opaque, with no breakdown by team, product, or environment. Internal chargeback accuracy was below 50%, and finance treated GPU spend as a black box.

- Teams couldn't plan: R&D, marketing, and support automation had no way to see who drove which share of GPU cost; budget planning was guesswork.

- Quantified impact: Chargeback accuracy stayed below 50%, and each compliance audit took 4–5 weeks because usage had to be reconstructed manually.

Pain Point 2: Long Queue Times During Shared GPU Demand Spikes

- Queue time P95 18–25 minutes: When multiple teams submitted jobs at once, GPU queue time spiked—production automation and experiments competed blindly.

- No priority or tiering: Whoever submitted first won; production workloads had no guaranteed headroom. Overall GPU utilization was only 30–38%, yet queues were long because capacity wasn't segmented or prioritized.

- Business impact: Teams lost confidence in the shared platform; some bypassed it and procured shadow GPU capacity, worsening both cost and security.

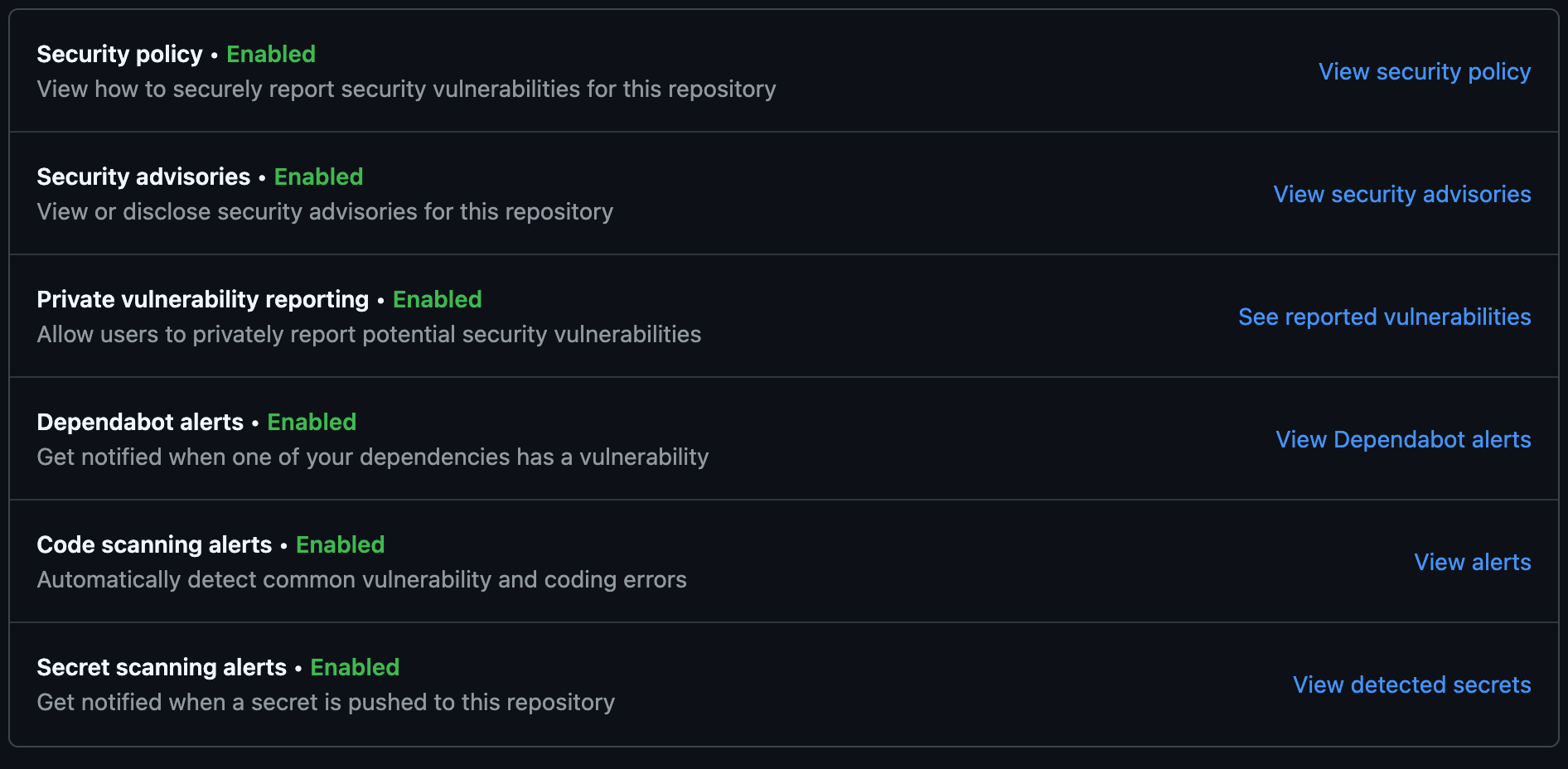

Pain Point 3: Security Concerns When Multiple Teams Shared Infrastructure

- Multi-tenant isolation unclear: Enterprise security and compliance required clear isolation between teams—data and workloads from different departments had to be separable and auditable.

- Traditional approach: Many IT orgs responded by giving each team its own cluster—fragmenting capacity and driving cost up while utilization dropped.

Baseline metrics (before TensorFusion):

| Metric | Baseline |
|---|---|
| GPU queue time P95 | 18–25 min |
| GPU utilization | 30–38% |
| Internal chargeback accuracy | <50% |
| Compliance audit effort | 4–5 weeks |

How TensorFusion Solves These Pain Points

TensorFusion provides multi-tenant isolation with policy-based GPU pools, chargeback tags and usage reporting per team, and priority lanes for production automation—so finance trusts the numbers, teams plan their budgets, and security/compliance get clear isolation and auditability.

Why Pain 1 (No Cost Visibility) Is Solved

- Chargeback tags and usage reporting per team (and optionally by product/environment) give finance and department heads clear attribution—spend is visible and auditable.

- Usage dashboards make "cost" a visible dimension of engineering decisions; chargeback accuracy in this deployment improved from <50% to >95%.

- Compliance audit effort dropped from 4–5 weeks to ~10 days because usage is tracked and reportable by default.
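The attribution described above amounts to grouping metered GPU usage by a tag and multiplying by a rate. The following is a minimal sketch of that idea in plain Python, not TensorFusion's actual reporting API: the `UsageRecord` fields, the `chargeback_by_tag` helper, the sample records, and the rate of 2.0 per GPU-hour are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One metered GPU job. All field names are hypothetical."""
    team: str
    product: str
    environment: str
    gpu_hours: float


def chargeback_by_tag(records, rate_per_gpu_hour, tag="team"):
    """Sum GPU-hours per value of `tag` and convert the totals to cost."""
    hours = defaultdict(float)
    for rec in records:
        hours[getattr(rec, tag)] += rec.gpu_hours
    return {key: round(h * rate_per_gpu_hour, 2) for key, h in hours.items()}


# Made-up sample data; the case study does not publish usage or pricing.
records = [
    UsageRecord("rnd", "search-ranking", "prod", 120.0),
    UsageRecord("rnd", "search-ranking", "dev", 30.0),
    UsageRecord("marketing", "copy-gen", "prod", 50.0),
]

by_team = chargeback_by_tag(records, rate_per_gpu_hour=2.0)
by_env = chargeback_by_tag(records, rate_per_gpu_hour=2.0, tag="environment")
print(by_team)  # {'rnd': 300.0, 'marketing': 100.0}
print(by_env)   # {'prod': 340.0, 'dev': 60.0}
```

Because every record carries all three tags, the same data can be re-cut by team, product, or environment without re-metering, which is what makes an audit a report rather than a manual reconstruction.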

Why Pain 2 (Long Queues) Is Solved

- Priority lanes for production automation: Production workloads get reserved headroom; experiments and batch jobs absorb remaining capacity—no more "whoever submits first wins."

- Policy-based GPU pools segment capacity by workload class and tenant; queue time P95 in this deployment dropped from ~22 min to ~6 min.

- GPU pooling and virtualization raise utilization from ~34% to ~74% while shortening queues—capacity is used more efficiently and allocated by policy, not luck.
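To make the priority-lane idea concrete, here is a small sketch of a pool that orders jobs by lane and keeps reserved headroom free for production. It is an illustration under stated assumptions, not TensorFusion's scheduler: the `PoolScheduler` class, the lane names, the 8-GPU pool, and the 2-GPU reservation are all hypothetical.

```python
import heapq
from itertools import count

# Lower number is dispatched first. Lane names are hypothetical.
PRIORITY = {"production": 0, "experiment": 1, "batch": 2}


class PoolScheduler:
    """Toy GPU pool with priority lanes and reserved production headroom."""

    def __init__(self, total_gpus, reserved_for_production):
        self.total = total_gpus
        self.reserved = reserved_for_production
        self.in_use = 0
        self.prod_in_use = 0
        self._queue = []
        self._seq = count()  # tie-breaker: submission order within a lane

    def submit(self, name, lane, gpus):
        heapq.heappush(self._queue, (PRIORITY[lane], next(self._seq), name, lane, gpus))

    def _fits(self, lane, gpus):
        free = self.total - self.in_use
        if lane == "production":
            return gpus <= free
        # Non-production jobs must leave the unused part of the reserve free.
        unused_reserve = max(0, self.reserved - self.prod_in_use)
        return gpus <= free - unused_reserve

    def dispatch(self):
        """Start every queued job that fits, in priority order; requeue the rest."""
        started, waiting = [], []
        while self._queue:
            item = heapq.heappop(self._queue)
            _, _, name, lane, gpus = item
            if self._fits(lane, gpus):
                self.in_use += gpus
                if lane == "production":
                    self.prod_in_use += gpus
                started.append(name)
            else:
                waiting.append(item)
        for item in waiting:
            heapq.heappush(self._queue, item)
        return started


sched = PoolScheduler(total_gpus=8, reserved_for_production=2)
sched.submit("nightly-batch", "batch", 4)      # submitted first
sched.submit("exp-a", "experiment", 4)
sched.submit("support-bot", "production", 2)   # submitted last
started = sched.dispatch()
print(started)  # ['support-bot', 'exp-a']
```

The production job runs first even though it was submitted last, and the batch job waits because only 2 GPUs remain. A real scheduler would also handle job completion, preemption, and per-tenant quotas, which this sketch leaves out.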

Why Pain 3 (Security Concerns) Is Solved

- Multi-tenant isolation with policy-based pools: Tasks from different teams are isolated by policy, so isolation is programmable and auditable rather than an honor system.

- Kubernetes-native integration keeps isolation enforceable at the platform layer; security reviews see clear boundaries and audit trails.

- Chargeback + isolation together give both governance and efficiency—teams share a pool safely, and finance/compliance get the visibility they need.

Results: Before vs After

| Metric | Before | After | Improvement |
|---|---|---|---|
| GPU queue time P95 | 22 min | 6 min | ~73% reduction |
| GPU utilization | 34% | 74% | ~2.2× |
| Chargeback accuracy | <50% | >95% | +45 points or more |
| Audit effort | 4–5 weeks | 10 days | ~60–75% reduction |
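The improvement figures can be reproduced from the before and after values. The short check below is a sketch using two hypothetical helpers, `percent_reduction` and `multiple`. It assumes "4–5 weeks" means 28–35 calendar days, which the source does not specify.

```python
def percent_reduction(before, after):
    """Relative drop from `before` to `after`, in percent."""
    return (before - after) / before * 100


def multiple(before, after):
    """How many times larger `after` is than `before`."""
    return after / before


queue_drop = round(percent_reduction(22, 6))   # queue time P95, minutes
util_gain = round(multiple(34, 74), 1)         # utilization, percent
# Assumption: 4-5 weeks read as 28-35 calendar days.
audit_drop = (round(percent_reduction(28, 10)), round(percent_reduction(35, 10)))

print(queue_drop)  # 73
print(util_gain)   # 2.2
print(audit_drop)  # (64, 71)
```

Under the calendar-day reading, the audit reduction lands inside the quoted range. Reading the weeks as working days (20–25 days) would give a smaller reduction of 50–60%.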

| Before TensorFusion | After TensorFusion |
|---|---|
| No cost visibility by team; chargeback <50%; audit 4–5 weeks | Chargeback >95%; audit ~10 days; finance and teams trust the numbers |
| Queue P95 18–25 min; no priority; utilization ~34% | Queue P95 ~6 min; priority lanes for production; utilization ~74% |
| Multi-tenant security a concern; fragmentation by team | Policy-based isolation; shared pool with clear boundaries and auditability |

"Finance finally trusted the numbers. Teams now plan their GPU budgets instead of guessing." — Head of IT Operations

Why TensorFusion Fits Internal AI Platforms

IT teams need governance and efficiency together: clear isolation, fair allocation, and transparent cost attribution across departments. TensorFusion delivers multi-tenant isolation (policy-based pools, programmable and auditable), chargeback and usage reporting (by team, product, and environment), and priority scheduling (production automation first; experiments and batch jobs absorb the rest). GPU pooling and virtualization raise utilization, shorten queues, and give finance and compliance the visibility they need, without fragmenting capacity by team or sacrificing security.
