Visual Inspection at Scale: Pooling GPU Resources Across Factories

A manufacturing case study on defect detection, throughput, and cost control with TensorFusion.

"We bought GPUs for peak launches—then they sat idle the rest of the quarter"

A multi-site manufacturer runs automated visual inspection across 9 factories. Workloads spiked during shift changes and major product launches; the rest of the time, edge GPUs were underused. Throughput bottlenecks appeared when multiple lines launched new SKUs, and training and inference competed for the same GPU resources—slowing both production and model refresh.

Three Core Pain Points: Underused Edge GPUs, Throughput Bottlenecks, and Training vs Inference Contention

Pain Point 1: Edge GPU Resources Underused Outside Shift Peaks

- Peak vs baseline: During shift changes and launch windows, GPUs were saturated; outside those windows, utilization often fell to 25–33%.

- No cross-factory sharing: Each factory was sized for its own peak, so idle capacity at one site could not help another and resources stayed fragmented across 9 sites.

- Quantified impact: Average GPU utilization 25–33%; defect detection throughput 220–260 items/min per line, with queues during multi-line launches.

Pain Point 2: Throughput Bottlenecks When Multiple Lines Launched New SKUs

- Multi-line launch reality: When several lines launched new SKUs at once, GPU capacity was exhausted—throughput dropped, queues grew, and quality checks slowed.

- Root cause: Each line competed for the same local GPU pool; no pooling across factories and no priority by line or launch criticality.

- Business impact: Launch windows slipped; quality escape rate 0.9–1.1% when queues backed up and inspection latency increased.

Pain Point 3: Training and Inference Competed for the Same GPUs

- Model refresh vs production: Retraining and fine-tuning ran on the same fleet as live inspection. Training jobs blocked inference; inference spikes delayed model refresh.

- Slow model refresh: The refresh cycle ran ~10 weeks, longer than desired, because training had to wait for "quiet" windows that rarely came.

- No tiering: No split between "always-on" inspection capacity and "burst" training capacity.

Baseline metrics (before TensorFusion):

| Metric | Baseline |
|---|---|
| Defect detection throughput | 220–260 items/min |
| GPU utilization | 25–33% |
| Model refresh cycle | 10 weeks |
| Quality escape rate | 0.9–1.1% |

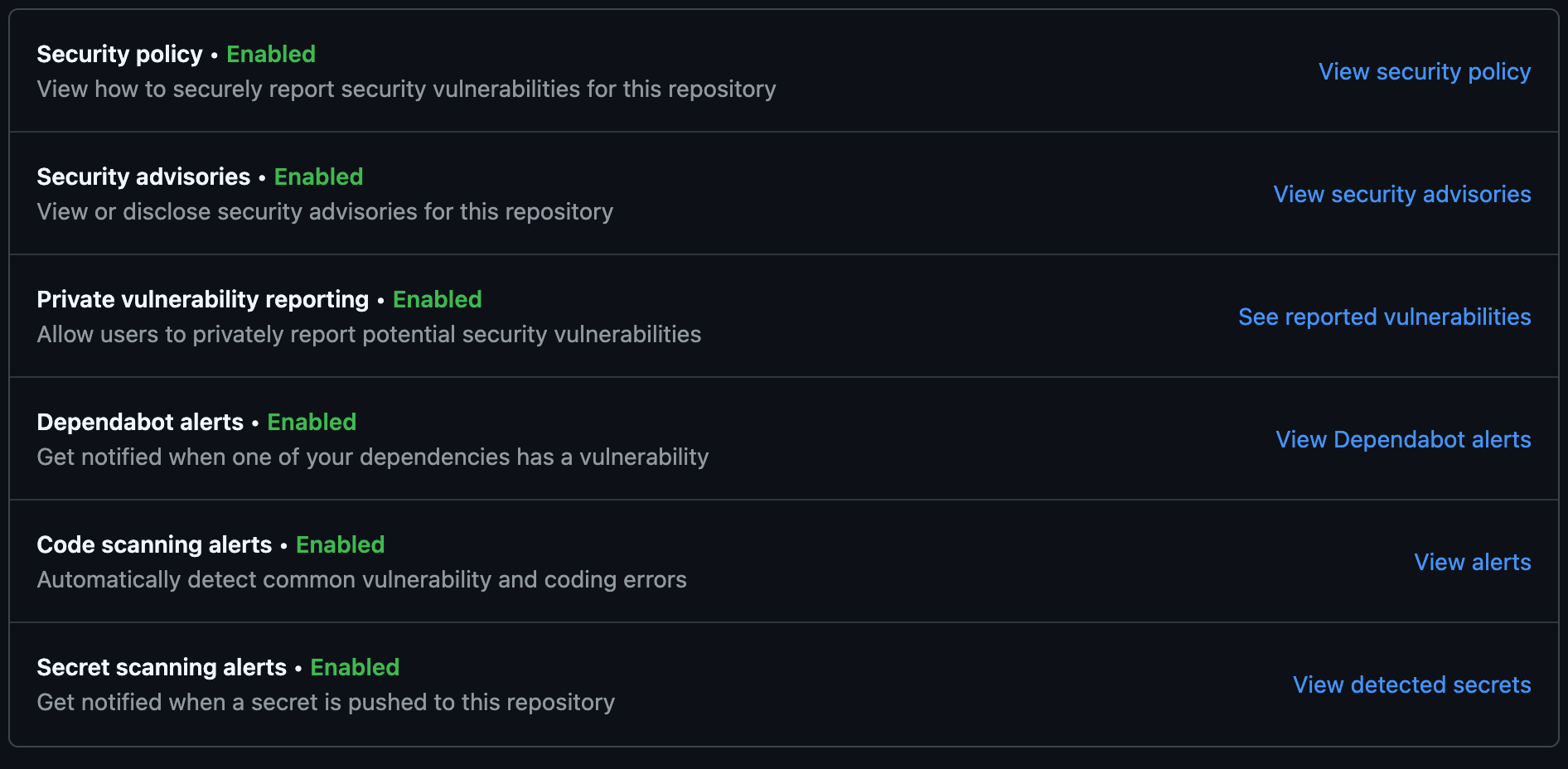

How TensorFusion Solves These Pain Points

TensorFusion provides edge-first inference with pooled GPU resources across factories, a burst training pool that activates only during model retraining windows, and policy-based GPU slicing to prioritize production lines—so throughput scales with demand, training doesn't block production, and spend aligns to actual usage.

Why Pain 1 (Underused Edge GPUs) Is Solved

- Pooled GPU resources across factories: Idle capacity in one site can serve another via TensorFusion's scheduling and (where policy allows) GPU-over-IP—compute moves, data can stay local.

- Usage-aware scaling: Capacity scales up for shift peaks and launch windows, scales down when idle; no more "buy for peak, pay for idle."

- Higher utilization: GPU virtualization and oversubscription raised utilization from ~30% to ~72% in this deployment.
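To see why pooling can raise utilization, compare sizing each factory for its own peak with sizing one shared pool for the combined peak. The sketch below is illustrative only: the site names and demand figures are hypothetical, not data from this deployment, and `capacity_needed` is a made-up helper, not a TensorFusion API.

```python
from typing import Dict, List


def capacity_needed(demand_by_site: Dict[str, List[float]]) -> Dict[str, float]:
    """Compare per-site peak sizing with pooled sizing.

    demand_by_site maps a site name to its GPU demand per time slot.
    All sites are assumed to use the same number of time slots.
    """
    # Each site buys enough GPUs for its own worst slot.
    per_site_total = sum(max(series) for series in demand_by_site.values())
    # A shared pool only needs to cover the worst combined slot.
    pooled_peak = max(sum(slot) for slot in zip(*demand_by_site.values()))
    return {"sized_per_site": per_site_total, "sized_pooled": pooled_peak}


# Hypothetical GPU demand for three sites over four time slots.
demand = {
    "factory_a": [8, 2, 2, 3],
    "factory_b": [2, 8, 3, 2],
    "factory_c": [3, 2, 8, 2],
}
result = capacity_needed(demand)
print(result)
```

When site peaks do not coincide, the pooled figure is smaller than the sum of per-site peaks, which is the effect the bullets above describe. If every site peaked in the same slot, the two figures would be equal and pooling alone would not help.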

Why Pain 2 (Throughput Bottlenecks) Is Solved

- Policy-based GPU slicing prioritizes production lines by criticality—launch-critical lines get reserved headroom; others share remaining capacity.

- Edge-first inference pool stays warm and stable for live inspection; burst pool absorbs training and heavy batch jobs so inference never waits on training.

- Cross-factory pooling turns 9 local pools into one logical pool—throughput in this deployment increased from ~240 to ~420 items/min per line during multi-line launches.
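The prioritization described above can be pictured as a two-step allocation: serve launch-critical lines first, then share what remains among the other lines in proportion to their demand. This is a minimal sketch under stated assumptions. `LineRequest`, `slice_pool`, and the `launch_critical` flag are hypothetical names, and the proportional-sharing rule is one plausible choice; the case study does not show TensorFusion's actual policy format.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class LineRequest:
    line: str
    demand: float          # GPUs requested by this production line
    launch_critical: bool  # hypothetical criticality flag


def slice_pool(total_gpus: float, requests: List[LineRequest]) -> Dict[str, float]:
    """Grant launch-critical lines first, then share the rest proportionally."""
    allocation = {r.line: 0.0 for r in requests}
    remaining = total_gpus

    # Step 1: launch-critical lines get their demand, up to what is left.
    for r in (r for r in requests if r.launch_critical):
        grant = min(r.demand, remaining)
        allocation[r.line] = grant
        remaining -= grant

    # Step 2: other lines split the remainder in proportion to demand.
    others = [r for r in requests if not r.launch_critical]
    other_demand = sum(r.demand for r in others)
    if other_demand > 0 and remaining > 0:
        scale = min(1.0, remaining / other_demand)
        for r in others:
            allocation[r.line] = r.demand * scale
    return allocation


requests = [
    LineRequest("line_1", 4.0, True),
    LineRequest("line_2", 4.0, False),
    LineRequest("line_3", 4.0, False),
]
allocation = slice_pool(8.0, requests)
print(allocation)
```

With 8 GPUs and 12 requested, the critical line keeps its full request and the other two lines each receive half of theirs.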

Why Pain 3 (Training vs Inference Contention) Is Solved

- Burst training pool activates only during model retraining windows; when idle, its capacity is released so it doesn't lock capital or block inference.

- Training and inference tiered: Inference gets "always-on" capacity; training gets elastic capacity that scales on queue pressure. Model refresh cycle in this deployment dropped from 10 weeks to 6 weeks.

- Priority as policy: Production inspection gets priority lanes; training still runs quickly—just not at the cost of production SLOs.
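A rough way to express "elastic capacity that scales on queue pressure" is a sizing function that returns zero when nothing is queued, grows with the training queue, and yields to production when inference headroom is tight. The function name, parameters, and the `inference_headroom_ok` signal are assumptions for illustration; they are not taken from TensorFusion.

```python
def burst_pool_size(
    queued_jobs: int,
    gpus_per_job: int,
    max_burst_gpus: int,
    inference_headroom_ok: bool,
) -> int:
    """Return how many GPUs the burst training pool should hold right now."""
    # Release everything when idle, or when production inspection needs the room.
    if queued_jobs <= 0 or not inference_headroom_ok:
        return 0
    # Otherwise grow with the queue, capped at the configured burst ceiling.
    return min(queued_jobs * gpus_per_job, max_burst_gpus)


# Hypothetical scenarios: idle, moderate queue, deep queue, production under pressure.
sizes = [
    burst_pool_size(0, 2, 16, True),
    burst_pool_size(3, 2, 16, True),
    burst_pool_size(20, 2, 16, True),
    burst_pool_size(3, 2, 16, False),
]
print(sizes)
```

Returning zero whenever inference headroom is short is the stricter reading of "not at the cost of production SLOs"; a real policy might shrink the pool gradually instead.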

Results: Before vs After

| Metric | Before | After | Improvement |
|---|---|---|---|
| Defect detection throughput | 240 items/min | 420 items/min | ~75% increase |
| GPU utilization | 30% | 72% | ~2.4× |
| Model refresh cycle | 10 weeks | 6 weeks | ~40% shorter |
| Quality escape rate | 1.0% | 0.4% | ~60% reduction |
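The Improvement column follows directly from the Before and After values. As a quick check, the snippet below recomputes each figure from the numbers in the table; `percent_change` is just a helper written for this check.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent, rounded to 1 decimal."""
    return round((after - before) / before * 100.0, 1)


# Before/After values copied from the results table.
results = {
    "throughput_items_per_min": (240.0, 420.0),
    "gpu_utilization_pct": (30.0, 72.0),
    "model_refresh_weeks": (10.0, 6.0),
    "quality_escape_rate_pct": (1.0, 0.4),
}

changes = {name: percent_change(b, a) for name, (b, a) in results.items()}
utilization_ratio = round(72.0 / 30.0, 1)

for name, change in changes.items():
    print(f"{name}: {change:+.1f}%")
print(f"gpu_utilization ratio: {utilization_ratio}x")
```

Negative values are reductions: the refresh cycle is 40% shorter and the escape rate is 60% lower. The 140% relative gain in utilization is the same result as the table's ~2.4× ratio.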

| Before TensorFusion | After TensorFusion |
|---|---|
| Bought GPUs for peak launches; idle the rest of the quarter | Pooled model paid for itself in two quarters; utilization 72% |
| Multi-line launches caused throughput drop and queues | Throughput 420 items/min; priority slicing by line |
| Training and inference fought for same GPUs; 10-week refresh | Tiered pools; refresh 6 weeks; inference never blocked |

"We stopped buying GPUs for peak launches only. The pooled model paid for itself in two quarters." — Manufacturing Systems Director

Why TensorFusion Fits Manufacturing

Factories have predictable shift peaks and bursty training windows. TensorFusion aligns compute to those patterns without overbuying: the edge-first inference pool stays warm for production, the burst training pool scales only when needed, and policy-based slicing gives launch-critical lines reserved headroom. GPU pooling and virtualization (memory isolation, oversubscription) make it possible to raise throughput, shorten model refresh cycles, and lower the quality escape rate, all while keeping spend predictable and tied to actual usage.
