TenClass: Giving Every Learner Their Own AI Lab Workstation

TenClass and TensorFusion co-developed an interactive smart classroom system that delivers instant-on AI lab environments, dramatically improves the teaching experience, and cuts per-learner GPU costs by over 80%.

About TenClass

TenClass is a Shenzhen-based education technology company focused on digital literacy and professional skills training. They serve vocational colleges, training organizations, and educational institutions with hands-on courses in AI image generation, AI video production, and applied machine learning. TenClass built their own open-source virtualization platform, mvisor, to give every learner a real, interactive AI workspace.

As their AI curriculum expanded, TenClass partnered with TensorFusion to co-develop an interactive course authoring and smart classroom system purpose-built for AI education—enabling instructors to rapidly create GPU-accelerated lab modules and giving students instant, on-demand access to fully provisioned environments.

The Challenges Before

1. Students Couldn't Get Into Their Lab Environment

The biggest risk to an AI practicum isn't difficult content—it's students who can't access the hands-on environment when class starts. During peak hours, GPU resources would run out and some students were left watching instead of doing. When an instructor says "open your workspace" and half the class sees a loading spinner, the entire session is compromised.

For any institution offering compute-intensive coursework, reliable lab availability isn't an IT detail—it's a prerequisite for effective instruction.

2. One Student, One GPU—An Unsustainable Model

To prevent cross-student interference (model collisions, VRAM conflicts), the traditional approach was one dedicated GPU per student. But students typically use their GPU for only a fraction of the time it is reserved: a 50-minute lab session, for example, on a GPU that stays provisioned for 24 hours. Effective utilization was below 25%, leaving most of the purchased compute idle.
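
To make the utilization gap concrete, here is a minimal arithmetic sketch. The session counts are illustrative assumptions, not TenClass's measured schedule, and `utilization` is a hypothetical helper name.

```python
def utilization(busy_minutes: float, provisioned_minutes: float) -> float:
    """Fraction of provisioned GPU time that is actually in use."""
    if provisioned_minutes <= 0:
        raise ValueError("provisioned_minutes must be positive")
    return busy_minutes / provisioned_minutes


MINUTES_PER_DAY = 24 * 60

# Assumed light day: one 50-minute lab on a GPU reserved for 24 hours.
one_session = utilization(50, MINUTES_PER_DAY)

# Assumed heavy day: five 50-minute labs on the same dedicated GPU.
five_sessions = utilization(5 * 50, MINUTES_PER_DAY)

print(f"{one_session:.1%}")    # 3.5%
print(f"{five_sessions:.1%}")  # 17.4%
```

Even under the heavier assumption, a dedicated GPU stays well under the 25% figure cited above.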

For universities and nonprofit institutions operating under fixed budgets, this one-to-one allocation model consumes funding without a proportional educational return.

3. Spinning Up New Courses Took Too Long

Every time the curriculum team wanted to launch a new AI lab module—say, a diffusion model workshop or a computer vision practicum—operations had to provision fresh GPU instances, install dependencies, and validate configurations. A single environment build routinely took 25–35 minutes, and compatibility issues could stretch that further. With AI tooling evolving rapidly, this lag meant course content often trailed the state of the art.

The Joint Solution: Interactive Course Authoring + Smart Classroom + GPU Pooling

The TenClass–TensorFusion partnership goes beyond "plugging in a compute layer." Together, they built an application-level system on top of TensorFusion's GPU orchestration engine, tailored specifically for educational delivery.

Smart Classroom: Students Get Instant Access

TensorFusion pools all available GPUs and allocates isolated slices on demand. When a student clicks into their lab environment, the system provisions a fully isolated workspace in seconds—no queue, no wait, no "resource unavailable" errors.

Each student's environment is completely sandboxed. There is no cross-contamination of models, data, or GPU memory between sessions. Instructors can focus on teaching rather than troubleshooting infrastructure.

Result: Lab environment availability rose from ~85–90% to 99.9%. Instruction is no longer disrupted by compute shortages.

Interactive Course Authoring: New Modules Ship in Nearly Half the Time

Within the co-developed platform, curriculum designers define what software and models a course requires. The platform handles all GPU allocation and environment provisioning automatically—no manual instance setup per course.
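
The source does not show the platform's actual authoring format, so the following is only a sketch of what a declarative course definition could look like. `CourseSpec`, `provisioning_request`, the field names, and the model name are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CourseSpec:
    """Hypothetical declarative description of one lab module."""
    title: str
    packages: tuple          # software the environment must include
    models: tuple            # model weights to pre-load
    vram_gb_per_student: int # GPU memory slice each learner needs


def provisioning_request(spec: CourseSpec, students: int) -> dict:
    """Combine a spec and a class size into one request a platform could act on."""
    if students <= 0:
        raise ValueError("students must be positive")
    return {
        "course": spec.title,
        "packages": sorted(spec.packages),
        "models": sorted(spec.models),
        "workspaces": students,
        # Upper bound: assumes every student is active at the same time.
        "total_vram_gb": students * spec.vram_gb_per_student,
    }


spec = CourseSpec(
    title="Diffusion Model Workshop",
    packages=("torch", "diffusers"),
    models=("example-diffusion-model",),  # placeholder, not a real model id
    vram_gb_per_student=8,                # assumed slice size
)
request = provisioning_request(spec, students=30)
print(request["total_vram_gb"])  # 240
```

The point of the declarative shape is that the designer states requirements once, and per-course instance setup becomes something the platform derives rather than something operations performs by hand.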

Result: New lab environment setup time dropped by ~45%, enabling the curriculum team to keep pace with fast-moving AI tooling.

GPU Pooling: Same Hardware, More Students

A single physical GPU no longer serves just one student. TensorFusion dynamically partitions GPUs so that multiple students share hardware while running in fully isolated environments. When a student finishes, their resources are instantly reclaimed and made available to the next session—achieving true function-level time-division multiplexing of GPU hardware.

Combined with TensorFusion's unique cross-node remote GPU invocation for elastic pooling, the system optimizes scheduling across both scheduled class times and open-ended after-hours practice sessions.
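
The allocate-and-reclaim cycle described above can be sketched as a toy pool. This is not TensorFusion's scheduler or API: `Gpu`, `GpuPool`, the first-fit policy, and the 8 GB slice size are assumptions for illustration, and the sketch models only capacity accounting, not the isolation mechanism itself.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Gpu:
    name: str
    total_vram_gb: int
    # Maps session id -> VRAM (GB) reserved for that session.
    slices: dict = field(default_factory=dict)

    @property
    def free_vram_gb(self) -> int:
        return self.total_vram_gb - sum(self.slices.values())


class GpuPool:
    """Toy pool: hands out VRAM slices first-fit and reclaims them on release."""

    def __init__(self, gpus: list) -> None:
        self.gpus = gpus

    def allocate(self, session_id: str, vram_gb: int) -> Optional[str]:
        """Reserve a slice on the first GPU with room; None means the pool is full."""
        for gpu in self.gpus:
            if gpu.free_vram_gb >= vram_gb:
                gpu.slices[session_id] = vram_gb
                return gpu.name
        return None

    def release(self, session_id: str) -> None:
        """Return a finished session's slice to the pool."""
        for gpu in self.gpus:
            gpu.slices.pop(session_id, None)


pool = GpuPool([Gpu("gpu-0", total_vram_gb=24)])

# Four students request 8 GB each; only three fit on one 24 GB card.
placements = [pool.allocate(f"student-{i}", vram_gb=8) for i in range(4)]
print(placements)  # ['gpu-0', 'gpu-0', 'gpu-0', None]

# When one student finishes, the freed slice serves the waiting student.
pool.release("student-0")
retry = pool.allocate("student-3", vram_gb=8)
print(retry)  # gpu-0
```

In this simplified model, one card serves three concurrent learners instead of one, and capacity freed by a finished session is reusable immediately.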

Result: Per-student compute costs dropped by over 80%. Peak-hour GPU utilization rose from under 25% to above 60%.

A Turnkey Solution for Universities and Educational Institutions

This joint platform isn't limited to TenClass's own programs. It's designed to serve universities, community colleges, vocational schools, and nonprofit educational organizations:

Lower the Barrier to Offering AI Courses

Institutions can choose between on-premises deployment (NVIDIA GPU clusters or domestic AI accelerator clusters as a turnkey hardware-software bundle) and cloud-hosted options that require no institutional infrastructure build-out and no dedicated ops team. Plug into your existing LMS and go. AI lab courses go from "we'd like to offer this" to "students can log in today."

Co-Develop AI Curriculum and Lab Content

TensorFusion and its partners work directly with institutions to develop lab content—covering AI image generation, AI video production, foundation model applications, and more. Schools don't just get a platform; they get a complete, deployable AI teaching package with practicum projects and supporting instructional materials.

On-Premises Deployment + Access to Leading Models

Data governance is a top concern for educational institutions. TensorFusion supports full on-premises deployment: models and data stay within the institution's network, which helps institutions meet FERPA, GDPR, and internal data-handling requirements.

At the same time, the platform provides access to state-of-the-art AI models from leading international research labs, ensuring that students train on current, industry-relevant tools rather than outdated software.

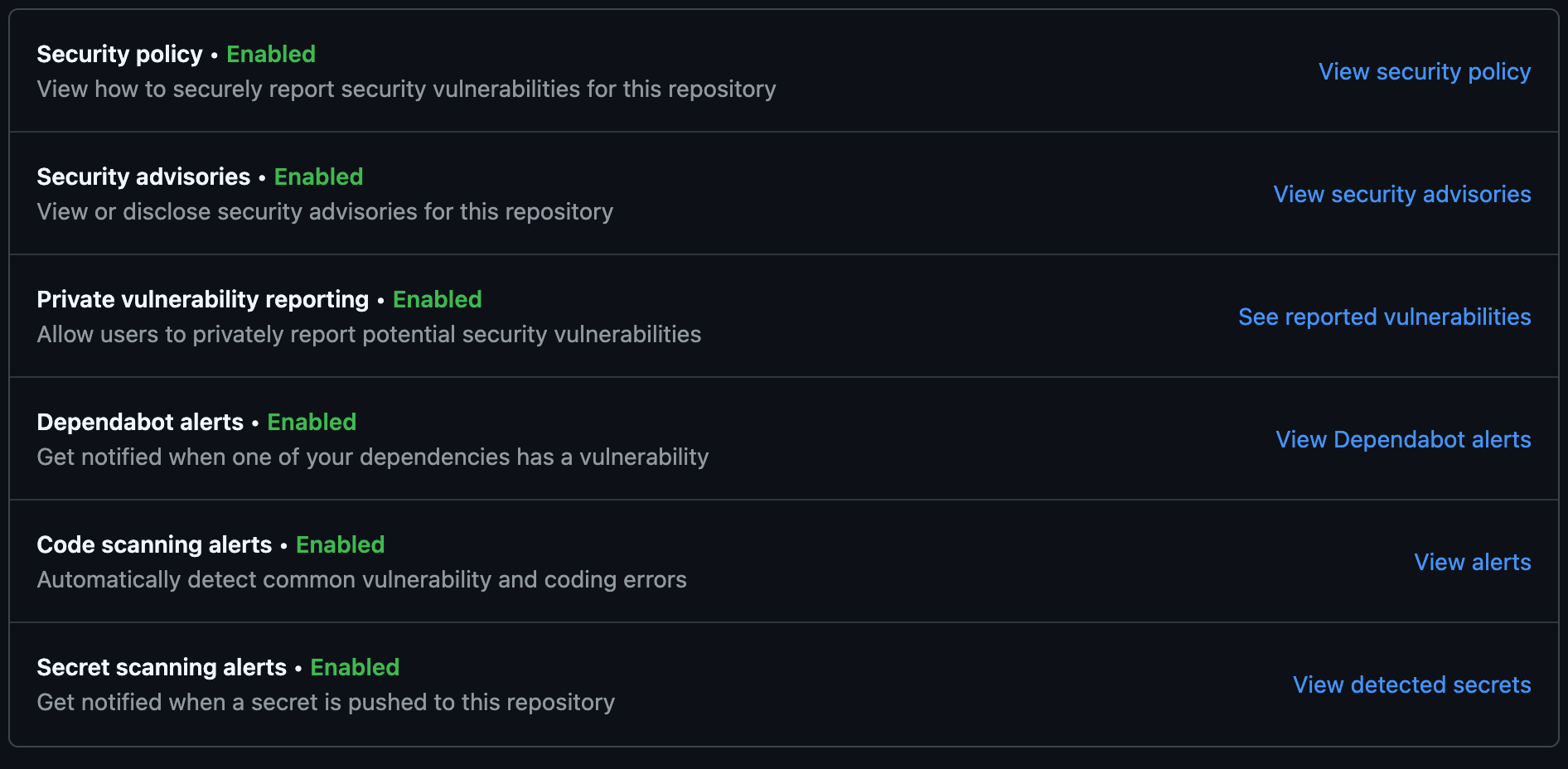

Measured Outcomes

| Metric | Before | After | Change |
|---|---|---|---|
| Lab environment availability | ~85–90% | 99.9% | ~10–15 percentage points higher |
| New lab environment setup time | 25–35 min | 14–19 min | ~45% shorter |
| GPU utilization (peak hours) | <25% | 60%+ | More than 2.4× higher |
| Per-student compute cost | 100% (baseline) | <20% | Over 80% reduction |

Common Questions

"Won't multiple students sharing a GPU cause interference?" No. Each student gets a fully isolated runtime environment—separate GPU memory, separate compute. One student's workload cannot see or affect another's, much like individual desks in a physical lab.

"Can this integrate with our existing LMS?" Yes. TenClass integrated the joint solution directly with their own teaching platform. Students log in through the same portal they already use. No system replacement required.

"We don't have a dedicated GPU ops team. Is this realistic?" That's precisely the point. Compute scheduling and environment management are handled by the platform. Your institution focuses on instruction and curriculum—not infrastructure.

"Instructors no longer worry about students getting locked out of their environments, and our curriculum team ships new modules much faster. Better student experience, better teaching outcomes." — TenClass Curriculum Team

Why TenClass Chose TensorFusion

The fundamental tension in AI education is that every student needs their own isolated workspace, but compute resources are always finite. TensorFusion's intelligent scheduling resolves that tension, and the co-developed smart classroom system embeds this capability directly into the daily teaching workflow—easy for instructors, effective for students, practical for institutions.

It was this approach—starting from the pedagogical workflow rather than from a spec sheet—that led TenClass to become TensorFusion's first partner.
