Public Safety Video Analytics at City Scale with Elastic GPU Resources

A public safety case study using pooled GPU resources to reduce response latency and improve utilization across city-wide video systems.

"We had GPUs in every district—but peak hours in one district couldn't use idle capacity in another"

A municipal public security bureau operates a city-wide video analytics system supporting real-time alerts, case review, and cross-district investigations. The system must keep data within each jurisdiction while still enabling rapid response to major incidents. Ops kept asking: "Why can't we use District B's idle GPUs when District A is saturated?" The answer was that data compliance forbade moving video between districts, and traditional deployments had no way to share compute without moving data.

Four Core Pain Points: Data Compliance vs Compute, Fragmentation, Peak-Hour Latency, Cost

Pain Point 1: Data Compliance vs. Compute Demand Conflict

The core challenge facing public security systems is the conflict between data sovereignty and compute demand:

- Data compliance requirements: Video data must be strictly confined to respective jurisdictions and cannot be transmitted across regions—a hard compliance requirement.

- Fluctuating compute demand: 8,000+ video streams create highly unpredictable inference workloads, with significant load variations across districts and time periods.

- Traditional solution limitations: Traditional approaches require independent GPU deployment in each district, preventing cross-regional resource sharing and causing severe resource waste.

Pain Point 2: Resource Fragmentation Leading to Low Utilization

Independent GPU deployments across districts create "island effects":

- Uneven resource distribution: Some districts have GPUs sitting idle (utilization as low as 20%), while others experience GPU saturation during peak hours with queued tasks.

- No elastic scheduling: Even when adjacent districts have idle GPUs, they cannot be used by other districts, resulting in severe resource fragmentation.

- Cost waste: Each district must provision GPUs for peak demand, but actual average utilization is only 22–30%, leaving substantial resources idle.

Pain Point 3: Peak-Hour Latency Impacting Emergency Response Efficiency

Major incidents and peak hours are when public security systems need the fastest response, yet system performance is at its worst:

- High alert latency: Peak-hour alert P95 latency reaches 5–7 seconds, severely impacting the critical window for emergency response.

- Case review queuing: Historical case review analysis requires 20–30 minutes of queuing, affecting case investigation efficiency.

- Resource competition: Real-time alerts, case reviews, and batch analysis tasks compete for GPU resources simultaneously, lacking priority guarantees.

Pain Point 4: High and Difficult-to-Optimize Costs

- Redundant investment: Independent GPU procurement across districts prevents economies of scale, driving up procurement costs.

- Complex operations: Dispersed GPU resources require independent operations management per district, increasing labor costs.

- Difficult expansion: Adding new districts or scaling requires new procurement and deployment, with long cycles and high costs.

Baseline metrics:

| Metric | Baseline |
|---|---|
| P95 alert latency | 5–7s |
| GPU utilization | 22–30% |
| Case review queue time | 20–30 min |
| Annual GPU cost | 100% (baseline) |
| Cross-district resource utilization | 0% (completely fragmented) |

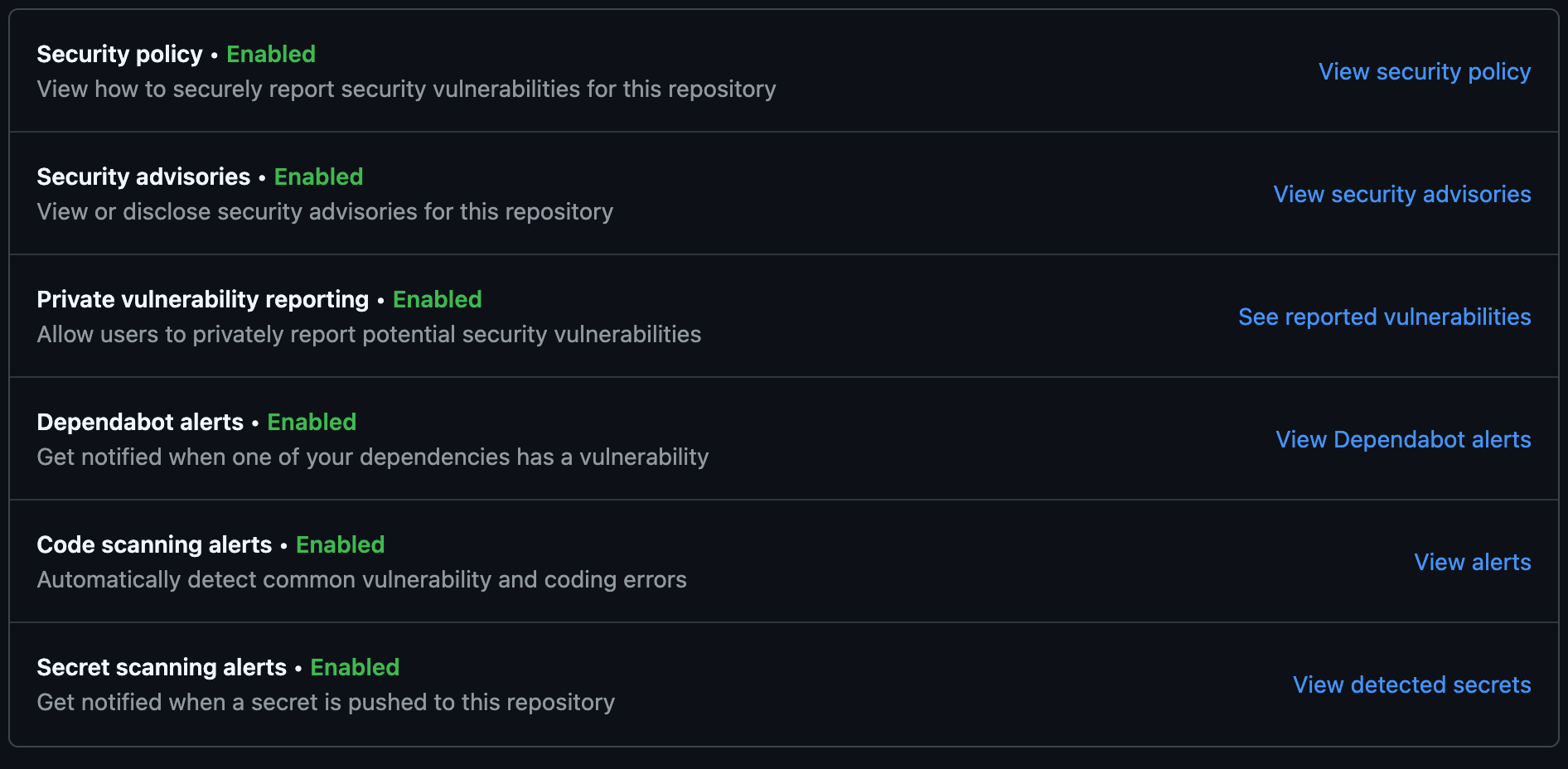

TensorFusion Solution

TensorFusion addresses the four pain points above through GPU-over-IP technology and Kubernetes-native scheduling:

Core Technology: Compute Moves, Data Stays

TensorFusion's core innovation is GPU-over-IP technology, achieving true "compute moves, data stays":

- Remote GPU sharing: GPU compute power is shared remotely over IP networks (with InfiniBand support), while video data remains in local districts, with compute scheduled to where data resides.

- Less than 5% performance overhead: Deeply optimized GPU-over-IP technology keeps performance overhead under 5%, fully meeting real-time inference latency requirements.

- Zero-intrusion deployment: Built on Kubernetes-native extensions, requiring no modification to existing application code—just add annotations to integrate.

Solution 1: Cross-District GPU Pooling to Break Resource Silos

- Unified resource pool: GPU resources from all districts are unified into a TensorFusion resource pool, enabling cross-district compute sharing.

- Intelligent scheduling: TensorFusion schedulers monitor load conditions across districts in real time, automatically scheduling idle district GPU compute to high-load districts.

- Resource isolation: GPU virtualization ensures tasks from different districts are completely isolated on shared GPUs, with no interference.
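
The pooling behavior described above can be sketched as a small placement function. This is a minimal illustration, not TensorFusion's scheduler: the `District` class, the `place_task` function, and the "most idle GPUs wins" rule are all assumptions made for the example. The point it shows is that the task's video stays in its home district while only the choice of GPU changes.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class District:
    """Hypothetical view of one district's GPUs inside a shared pool."""
    name: str
    total_gpus: int
    busy_gpus: int = 0

    @property
    def idle_gpus(self) -> int:
        return self.total_gpus - self.busy_gpus


def place_task(data_district: str, pool: Dict[str, District]) -> Optional[str]:
    """Return the district whose GPU runs the task.

    The video never leaves `data_district`; only the compute location varies.
    Assumed policy: prefer a local idle GPU, otherwise borrow from the
    district with the most idle GPUs, otherwise report saturation with None.
    """
    local = pool[data_district]
    if local.idle_gpus > 0:
        chosen = local
    else:
        candidates = [d for d in pool.values() if d.idle_gpus > 0]
        if not candidates:
            return None  # whole pool is saturated; a caller would queue the task
        chosen = max(candidates, key=lambda d: d.idle_gpus)
    chosen.busy_gpus += 1
    return chosen.name


# District A is saturated, B is mostly idle, C has one GPU left.
pool = {
    "A": District("A", total_gpus=2, busy_gpus=2),
    "B": District("B", total_gpus=4, busy_gpus=1),
    "C": District("C", total_gpus=4, busy_gpus=3),
}
first = place_task("A", pool)   # A has no idle GPU, so it borrows from B
second = place_task("C", pool)  # C still has a local idle GPU
print(first, second)
```

A production scheduler would also weigh network distance, isolation, and priority. The sketch only captures the "borrow idle capacity, keep data local" decision.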

Solution 2: Pipeline Inference to Improve Utilization

- Virtual large GPUs: Multiple idle GPU nodes are combined into virtual large GPUs, supporting pipeline-parallel inference for large models.

- Dynamic partitioning: GPU resources are dynamically partitioned based on task requirements—small tasks use small slices, large tasks use large slices—maximizing resource utilization.

- Oversubscription: Through GPU virtualization and memory tiering, GPU resource oversubscription is supported, further improving utilization.
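
To make dynamic partitioning and oversubscription concrete, here is a toy admission model. It is a sketch under stated assumptions: the `VirtualGPU` class, the percent-based slices, the 150% cap, and the task names are hypothetical. It does not model memory tiering or how TensorFusion enforces isolation.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class VirtualGPU:
    """One physical GPU carved into slices, measured in percent of the device."""
    oversub_pct: int = 150  # assumed cap: commitments may reach 150% of nominal capacity
    slices: Dict[str, int] = field(default_factory=dict)

    @property
    def committed_pct(self) -> int:
        return sum(self.slices.values())

    def allocate(self, task_id: str, request_pct: int) -> bool:
        """Admit a slice only if total commitments stay within the cap."""
        if request_pct <= 0 or task_id in self.slices:
            return False
        if self.committed_pct + request_pct > self.oversub_pct:
            return False
        self.slices[task_id] = request_pct
        return True

    def release(self, task_id: str) -> None:
        self.slices.pop(task_id, None)


gpu = VirtualGPU()
results = [
    gpu.allocate("plate-reader", 20),   # small task, small slice
    gpu.allocate("crowd-model", 80),    # large task, large slice
    gpu.allocate("batch-reindex", 40),  # total 140% <= 150%, admitted via oversubscription
    gpu.allocate("large-model", 30),    # total would be 170% > 150%, rejected
]
print(results, gpu.committed_pct)
```

Oversubscription in this model only pays off when the admitted tasks rarely peak at the same time. Picking the cap is a policy decision that the source does not specify.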

Solution 3: Priority Guarantees for Critical Tasks

- Local priority strategy: When local districts have urgent tasks, remotely shared GPU compute gracefully exits, prioritizing local tasks.

- Event-level scheduling: For major events and emergencies, TensorFusion supports event-level policy scheduling, automatically elevating priority for related tasks.

- SLA guarantees: Policy-driven scheduling ensures real-time alert tasks always receive sufficient GPU resources, with latency stable within SLA ranges.
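
One way to picture these priority rules is a queue that orders work first by task class and then by locality. The sketch below is an assumption-laden illustration: the `PriorityDispatcher` class, the three class names, and the tie-break that puts local tasks ahead of remote borrowers are hypothetical, not TensorFusion's documented policy.

```python
import heapq
from itertools import count
from typing import List, Optional, Tuple

# Assumed ordering: real-time alerts first, then case review, then batch analysis.
PRIORITY = {"alert": 0, "case_review": 1, "batch": 2}


class PriorityDispatcher:
    """Dispatch tasks by (class priority, local before remote, arrival order)."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, int, str]] = []
        self._seq = count()  # arrival counter keeps ordering stable within a class

    def submit(self, kind: str, task_id: str, local: bool = True) -> None:
        locality = 0 if local else 1  # local tasks sort ahead of borrowed-GPU tasks
        heapq.heappush(self._heap, (PRIORITY[kind], locality, next(self._seq), task_id))

    def next_task(self) -> Optional[str]:
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[-1]


d = PriorityDispatcher()
d.submit("batch", "b1")
d.submit("case_review", "r1", local=False)
d.submit("alert", "a1", local=False)
d.submit("alert", "a2", local=True)

order = [d.next_task() for _ in range(4)]
print(order)  # ['a2', 'a1', 'r1', 'b1']
```

An event-level policy could be modeled by temporarily giving every task tagged with an incident the priority of the alert class. Graceful exit of remote work would additionally require preempting tasks that are already running, which this queue does not model.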

Solution 4: Kubernetes-Native for Simplified Operations

- Zero-intrusion integration: Fully implemented as Kubernetes extensions, requiring no modification to existing applications—just add TensorFusion annotations to Pods.

- Unified management: Through the TensorFusion console, GPU resources across all districts are managed uniformly, simplifying operations.

- Auto-scaling: Supports GPU resource-based auto-scaling, automatically adjusting resource allocation based on load.

Implementation Highlights

- Compliance guarantee: Data always remains in local districts, with compute scheduled over the network, fully meeting data compliance requirements.

- Performance improvement: Through GPU pooling and intelligent scheduling, P95 alert latency dropped from 5–7 seconds (about 6 seconds at the midpoint) to 1.5 seconds, a 75% reduction.

- Cost optimization: GPU utilization increased from 26% to 68%, with annual GPU costs reduced by 42%.

- Elastic scaling: When adding new districts or scaling, simply connect to the TensorFusion resource pool—no new hardware procurement needed.

Results: Before vs After

| Metric | Before | After | Improvement |
|---|---|---|---|
| P95 alert latency | 6s | 1.5s | 75% reduction |
| GPU utilization | 26% | 68% | ~2.6× |
| Case review queue time | 25 min | 8 min | 68% reduction |
| Annual GPU cost | 100% | 58% | 42% reduction |
| Cross-district resource utilization | 0% (fragmented) | 35–45% | from 0 to 35%+ |
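
The Improvement column follows directly from the Before and After values. The short check below recomputes it. The helper names `pct_reduction` and `improvement_factor` are introduced here for illustration only.

```python
def pct_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return (before - after) * 100 / before


def improvement_factor(before: float, after: float) -> float:
    """How many times larger `after` is than `before`."""
    return after / before


latency_cut = pct_reduction(6.0, 1.5)   # P95 alert latency, seconds
queue_cut = pct_reduction(25, 8)        # case review queue time, minutes
cost_cut = pct_reduction(100, 58)       # annual GPU cost, percent of baseline
util_gain = improvement_factor(26, 68)  # GPU utilization, percent

print(f"latency: {latency_cut:.0f}% reduction")   # latency: 75% reduction
print(f"queue:   {queue_cut:.0f}% reduction")     # queue:   68% reduction
print(f"cost:    {cost_cut:.0f}% reduction")      # cost:    42% reduction
print(f"utilization: {util_gain:.1f}x")           # utilization: 2.6x
```

The Before values are midpoints of the baseline ranges (5–7s, 22–30%, 20–30 min), so the percentages describe the typical case, not the worst case.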

| Before TensorFusion | After TensorFusion |
|---|---|
| Data had to stay local; GPUs fragmented by district; utilization ~26% | Data stays local; compute pools across districts via GPU-over-IP; utilization 68% |
| Peak-hour alert latency 5–7s; case review queued 20–30 min | Alert P95 1.5s; case review 8 min; priority guarantees for critical tasks |
| Each district sized for peak; annual cost 100%; no cross-district sharing | Cross-district pooling; annual cost 58%; elastic scaling without new procurement |

“We kept data local while compute flowed where it was needed. Latency dropped below 2 seconds during a city-wide event.” — Public Safety IT Lead

Why It Works for Government

Perfect Fit for Government Business Characteristics

The core requirements of public security operations are data sovereignty and rapid response. TensorFusion reconciles these seemingly contradictory needs by moving compute instead of data:

- Data compliance guarantee:
  - Video data always remains in local districts and is never transmitted across regions
  - Through GPU-over-IP technology, only compute flows over the network; data stays in place
  - Meets compliance requirements such as the Data Security Law and Personal Information Protection Law

- Rapid response capability:
  - Cross-district GPU pooling ensures sufficient compute resources during peak hours
  - Priority scheduling guarantees that critical tasks always execute first
  - P95 alert latency reduced from 5–7 seconds to 1.5 seconds, dramatically improving emergency response efficiency

- Controllable costs:
  - GPU utilization increased 2.6×, from 26% to 68%
  - Annual GPU costs reduced by 42%, saving significant fiscal expenditure
  - Unified management reduces operational costs and improves management efficiency

- Technical advancement:
  - Kubernetes-native, seamlessly integrated with existing infrastructure
  - GPU virtualization technology enables true resource isolation and oversubscription
  - GPU-over-IP support with less than 5% performance overhead, meeting real-time inference requirements

Advantages Over Traditional Solutions

| Comparison | Traditional Solution | TensorFusion Solution |
|---|---|---|
| Data compliance | ✅ Data stays in district | ✅ Data stays in district |
| Resource utilization | ❌ 22–30% (fragmented) | ✅ 68% (pooled) |
| Cross-regional sharing | ❌ Not supported | ✅ Supported (compute sharing) |
| Peak-hour latency | ❌ 5–7s | ✅ 1.5s |
| Cost optimization | ❌ Cannot optimize | ✅ Reduced by 42% |
| Operational complexity | ❌ Distributed management | ✅ Unified management |
| Scalability | ❌ Requires new procurement | ✅ Elastic scaling |

TensorFusion achieves cross-regional compute resource sharing while meeting data compliance requirements through technological innovation. It ensures data sovereignty, improves response speed, and significantly reduces costs—making it an ideal solution for government public safety scenarios.
